I bet this is not the kubectl apply you know!

This post introduces several concepts related to kubectl apply and the main logic behind the operations involved, chiefly Server Side Apply and Client Side Apply.

Summary in one sentence

When kubectl apply runs, it first reads the resources and then updates them on the apiserver.

How resources are read

The command below brings back the dread of Tan Haoqiang's i+++++i (although this kind of usage has little practical value):

    cat staging/src/k8s.io/kubectl/testdata/apply/cm.yaml | kubectl apply \

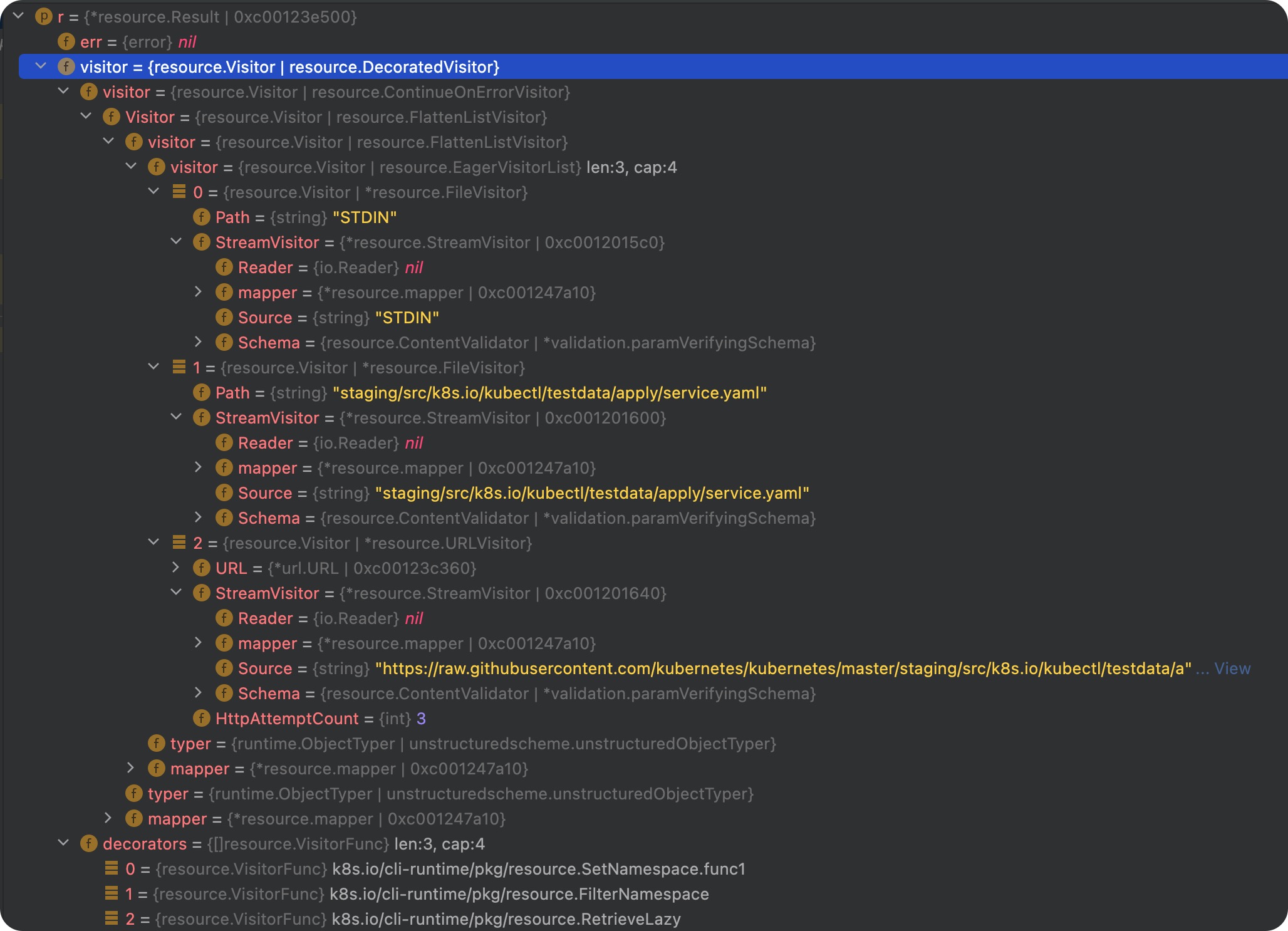

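The command above reads resources from three different kinds of "file", which correspond to three different Visitors in the source code. All three can be abstracted as a stream, so under the hood they all rely on StreamVisitor.

With -f dir, there is one FileVisitor for every file in the directory.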
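To make the "everything is a stream" idea concrete, here is a minimal sketch. The streamVisitor type, visitFunc, collectDocs and demoVisitors are hypothetical names invented for this illustration, not the real cli-runtime API, and the "---" splitting is a simplification of real YAML decoding. The only point is that once a source can be exposed as an io.Reader, one visitor can serve stdin, files and URLs alike.

```go
package main

import (
	"bufio"
	"fmt"
	"io"
	"strings"
)

// visitFunc is invoked once for every document found in a source.
type visitFunc func(source, doc string) error

// streamVisitor is a hypothetical, simplified stand-in for the idea behind
// StreamVisitor: it only needs an io.Reader, so stdin, a file, or an HTTP
// body all fit.
type streamVisitor struct {
	source string
	r      io.Reader
}

// Visit splits the stream on "---" separator lines and hands every
// non-empty document to fn.
func (v streamVisitor) Visit(fn visitFunc) error {
	var lines []string
	flush := func() error {
		doc := strings.TrimSpace(strings.Join(lines, "\n"))
		lines = nil
		if doc == "" {
			return nil
		}
		return fn(v.source, doc)
	}
	sc := bufio.NewScanner(v.r)
	for sc.Scan() {
		if strings.TrimSpace(sc.Text()) == "---" {
			if err := flush(); err != nil {
				return err
			}
			continue
		}
		lines = append(lines, sc.Text())
	}
	if err := sc.Err(); err != nil {
		return err
	}
	return flush()
}

// collectDocs visits every source in order and gathers the documents.
func collectDocs(visitors []streamVisitor) ([]string, error) {
	var docs []string
	for _, v := range visitors {
		err := v.Visit(func(source, doc string) error {
			docs = append(docs, source+": "+doc)
			return nil
		})
		if err != nil {
			return nil, err
		}
	}
	return docs, nil
}

// demoVisitors uses strings.NewReader in place of os.Stdin, an opened
// file, and an HTTP response body.
func demoVisitors() []streamVisitor {
	return []streamVisitor{
		{source: "stdin", r: strings.NewReader("kind: ConfigMap")},
		{source: "file", r: strings.NewReader("kind: Service\n---\nkind: Deployment\n")},
		{source: "url", r: strings.NewReader("---\nkind: Pod\n")},
	}
}

func main() {
	docs, err := collectDocs(demoVisitors())
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	for _, d := range docs {
		fmt.Println(d)
	}
}
```

The three sources differ only in how the reader is obtained; everything after that is shared.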

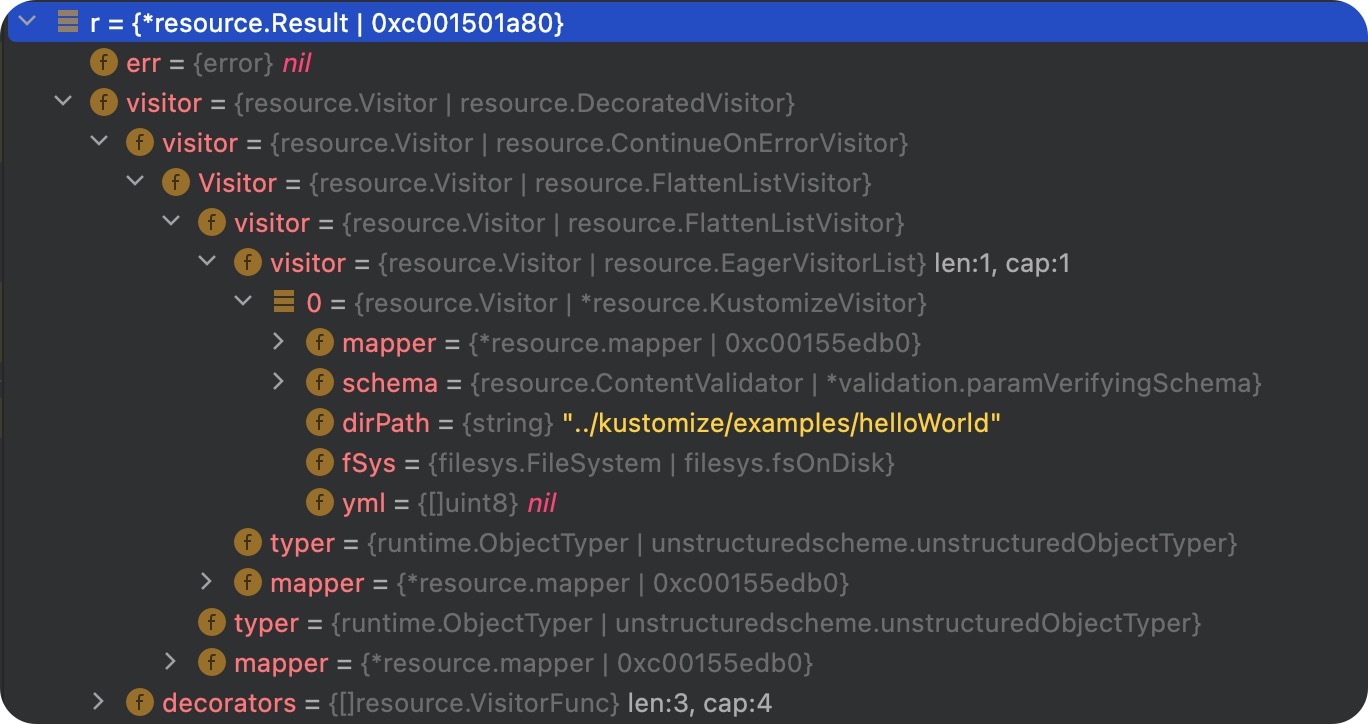

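Resources can also be read from a kustomize directory. Like a Helm chart, a kustomize directory has to be built before it yields manifests. Usage:

    kubectl apply -k ../kustomize/examples/helloWorld

For a kustomize source, a KustomizeVisitor is added. It constructs a default kustomize command and runs it, which has the same effect as running kustomize build. The build output is then read through a StreamVisitor, just like the other sources.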

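An example of what the other Visitors in the pipeline do:

- When several resources are being loaded, an error while reading one of them does not affect the others.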
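The behaviour in the bullet above is a natural fit for a decorator around other visitors. The sketch below assumes a made-up Visitor interface with hypothetical sourceVisitor and continueOnError types; it is not the cli-runtime implementation, only an illustration of recording an error and moving on to the next source.

```go
package main

import (
	"errors"
	"fmt"
	"strings"
)

// Visitor walks a set of resources and calls fn for each one.
// (Hypothetical interface for this sketch.)
type Visitor interface {
	Visit(fn func(name string) error) error
}

// sourceVisitor is a hypothetical leaf visitor that "loads" one resource.
type sourceVisitor struct {
	name   string
	broken bool
}

func (s sourceVisitor) Visit(fn func(name string) error) error {
	if s.broken {
		return fmt.Errorf("cannot read %s", s.name)
	}
	return fn(s.name)
}

// continueOnError wraps several visitors; a failure in one is recorded
// and the remaining visitors still run.
type continueOnError struct {
	visitors []Visitor
}

func (c continueOnError) Visit(fn func(name string) error) error {
	var msgs []string
	for _, v := range c.visitors {
		if err := v.Visit(fn); err != nil {
			msgs = append(msgs, err.Error())
		}
	}
	if len(msgs) == 0 {
		return nil
	}
	return errors.New(strings.Join(msgs, "; "))
}

// load returns the names that were read plus the aggregated error, if any.
func load(v Visitor) ([]string, error) {
	var names []string
	err := v.Visit(func(name string) error {
		names = append(names, name)
		return nil
	})
	return names, err
}

// demo builds three sources, the second of which fails to load.
func demo() Visitor {
	return continueOnError{visitors: []Visitor{
		sourceVisitor{name: "a.yaml"},
		sourceVisitor{name: "b.yaml", broken: true},
		sourceVisitor{name: "c.yaml"},
	}}
}

func main() {
	names, err := load(demo())
	fmt.Println("loaded:", names)
	fmt.Println("error:", err)
}
```

Because the wrapper satisfies the same interface as what it wraps, it can be stacked with other decorators without the leaf visitors knowing about it.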

At this point, the resources that were read are stored in a list.

    infos, err := o.GetObjects()

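Updating resources

After all resources have been read, each one is processed individually, and the result for each resource is independent of the others.

    for _, info := range infos {

At this point the apply flow is complete; the code that follows the snippet above aggregates the errors.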

Observations and questions

When viewing a resource's YAML in Lens, there is a very long managedFields block. What is it, and what is it for? 💻 Demo => one.yaml

    k get deployment nginx-dep-one --show-managed-fields

After running kubectl apply, what is the kubectl.kubernetes.io/last-applied-configuration annotation, and what is it for? What does the Warning below mean, and does it have side effects? 💻 Demo => two.yaml

    Warning: resource deployments/nginx-dep-two is missing the kubectl.kubernetes.io/last-applied-configuration annotation which is required by kubectl apply. kubectl apply should only be used on resources created declaratively by either kubectl create --save-config or kubectl apply. The missing annotation will be patched automatically.
    deployment.apps/nginx-dep-two configured

It does have a side effect: when the applied data removes a field, the field is not actually deleted.

- kubectl create -f data/two.yaml
- Remove one of the containers from two.yaml
- kubectl apply -f data/two.yaml

What is a conflict? 💻 Demo => three.yaml

    error: Operation cannot be fulfilled on pods "nginx-dep-7486b598d5-k794w": the object has been modified; please apply your changes to the latest version and try again
    Please review the fields above--they currently have other managers. Here
    are the ways you can resolve this warning:
    * If you intend to manage all of these fields, please re-run the apply
      command with the `--force-conflicts` flag.
    * If you do not intend to manage all of the fields, please edit your
      manifest to remove references to the fields that should keep their
      current managers.
    * You may co-own fields by updating your manifest to match the existing
      value; in this case, you'll become the manager if the other manager(s)
      stop managing the field (remove it from their configuration).
    See https://kubernetes.io/docs/reference/using-api/server-side-apply/#conflicts

- First create the svc as manager a

      kubectl apply -f data/three.yaml --server-side --field-manager=a

- Modify a field of the svc
- Then modify the svc as manager b

      kubectl apply -f data/three.yaml --server-side --field-manager=b

Types of apply operations

Client Side Apply (default)

The first apply issues the following requests:

    I0726 14:41:20.148843 73549 loader.go:372] Config loaded from file: /Users/yangyu/.kube/config
    I0726 14:41:20.149611 73549 cert_rotation.go:137] Starting client certificate rotation controller
    I0726 14:41:20.165326 73549 round_trippers.go:553] GET https://192.168.59.100:8443/openapi/v2?timeout=32s 200 OK in 15 milliseconds
    I0726 14:41:20.217376 73549 round_trippers.go:553] GET https://192.168.59.100:8443/apis/apps/v1/namespaces/default/deployments/nginx-dep 404 Not Found in 1 milliseconds
    I0726 14:41:20.224037 73549 round_trippers.go:553] POST https://192.168.59.100:8443/apis/apps/v1/namespaces/default/deployments?fieldManager=kubectl-client-side-apply&fieldValidation=Strict 201 Created in 6 milliseconds
    deployment.apps/nginx-dep created

Applying again with no changes issues the following requests:

    I0726 14:42:15.636114 73739 loader.go:372] Config loaded from file: /Users/yangyu/.kube/config
    I0726 14:42:15.641428 73739 cert_rotation.go:137] Starting client certificate rotation controller
    I0726 14:42:15.676334 73739 round_trippers.go:553] GET https://192.168.59.100:8443/openapi/v2?timeout=32s 200 OK in 32 milliseconds
    I0726 14:42:15.780602 73739 round_trippers.go:553] GET https://192.168.59.100:8443/apis/apps/v1/namespaces/default/deployments/nginx-dep 200 OK in 6 milliseconds
    deployment.apps/nginx-dep unchanged

Applying again after the manifest has been modified issues the following requests:

    I0726 14:43:12.579529 73954 loader.go:372] Config loaded from file: /Users/yangyu/.kube/config
    I0726 14:43:12.582585 73954 cert_rotation.go:137] Starting client certificate rotation controller
    I0726 14:43:12.605987 73954 round_trippers.go:553] GET https://192.168.59.100:8443/openapi/v2?timeout=32s 200 OK in 21 milliseconds
    I0726 14:43:12.663763 73954 round_trippers.go:553] GET https://192.168.59.100:8443/apis/apps/v1/namespaces/default/deployments/nginx-dep 200 OK in 2 milliseconds
    I0726 14:43:12.671397 73954 round_trippers.go:553] PATCH https://192.168.59.100:8443/apis/apps/v1/namespaces/default/deployments/nginx-dep?fieldManager=kubectl-client-side-apply&fieldValidation=Strict 200 OK in 6 milliseconds
    deployment.apps/nginx-dep configured

Server Side Apply (enabled with --server-side=true)

The requests are as follows:

    I0726 14:38:39.703841 72954 loader.go:372] Config loaded from file: /Users/yangyu/.kube/config
    I0726 14:38:39.704461 72954 cert_rotation.go:137] Starting client certificate rotation controller
    I0726 14:38:39.726918 72954 round_trippers.go:553] GET https://192.168.59.100:8443/openapi/v2?timeout=32s 200 OK in 22 milliseconds
    I0726 14:38:39.796641 72954 round_trippers.go:553] PATCH https://192.168.59.100:8443/api/v1/namespaces/default/pods/nginx-dep-7486b598d5-k794w?fieldManager=kubectl&fieldValidation=Strict&force=false 409 Conflict in 8 milliseconds

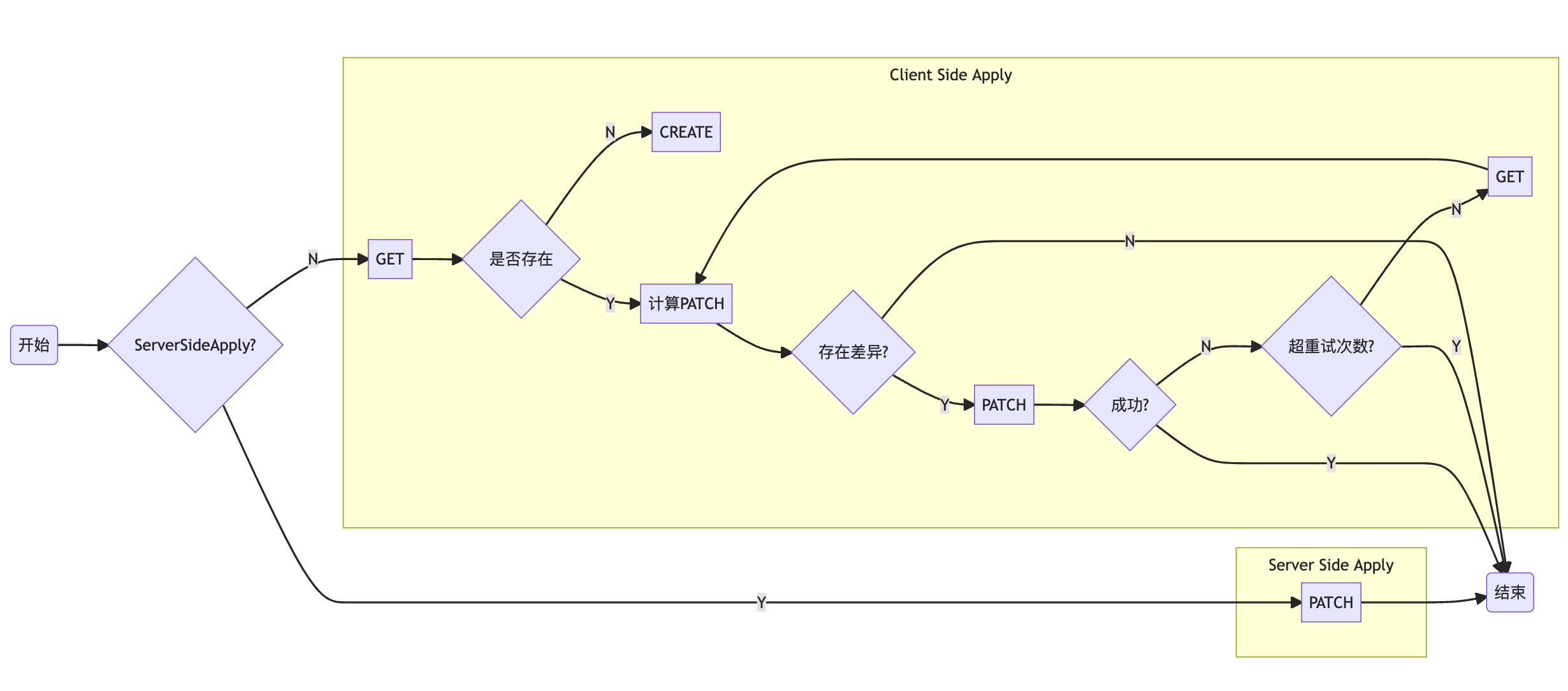

当读取到资源后,apply 操作后续的代码逻辑流程示意图如下:

对 client side apply,当 patch 处理失败时,会进行 5 次重试。
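The retry behaviour can be sketched as a small loop. This is an illustration only: errConflict, patchWithRetry and flakyPatch are hypothetical names, and the sketch assumes that only conflict errors trigger a retry and that the limit of 5 counts retries after the first attempt.

```go
package main

import (
	"errors"
	"fmt"
)

// errConflict is a hypothetical sentinel standing in for an HTTP 409 response.
var errConflict = errors.New("conflict: the object has been modified")

// patchWithRetry calls patch once and then retries while it keeps failing
// with errConflict, up to maxRetries additional attempts. It returns the
// number of calls made and the last error.
func patchWithRetry(maxRetries int, patch func(attempt int) error) (int, error) {
	calls := 1
	err := patch(calls)
	for retry := 1; retry <= maxRetries && errors.Is(err, errConflict); retry++ {
		// A real client would re-read the live object here and recompute
		// the patch before trying again.
		calls++
		err = patch(calls)
	}
	return calls, err
}

// flakyPatch returns a patch function that conflicts for the first n calls.
func flakyPatch(n int) func(int) error {
	return func(attempt int) error {
		if attempt <= n {
			return errConflict
		}
		return nil
	}
}

func main() {
	calls, err := patchWithRetry(5, flakyPatch(2))
	fmt.Println("calls:", calls, "err:", err)

	calls, err = patchWithRetry(5, flakyPatch(10))
	fmt.Println("calls:", calls, "err:", err)
}
```

Errors other than a conflict end the loop immediately, since retrying them with fresh data would not help.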

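Characteristics of server side apply:

- Every field in a resource has an owning manager; when a different manager tries to change the same field, a conflict is reported;

- All of the logic runs on the server, independent of the client's network.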
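Field ownership is easier to reason about with a toy model. The sketch below is heavily simplified and every name in it (object, newObject, apply) is hypothetical: it tracks a flat map of field paths, treats "same value" as co-ownership, and ignores the real rule that fields a manager stops applying are released. It is meant to mirror the a/b manager demo, not the apiserver's implementation.

```go
package main

import (
	"fmt"
	"sort"
)

// object is a hypothetical, flattened model of a live resource.
type object struct {
	values map[string]string          // field path -> current value
	owners map[string]map[string]bool // field path -> set of managers
}

func newObject() *object {
	return &object{values: map[string]string{}, owners: map[string]map[string]bool{}}
}

// apply tries to set the desired fields on behalf of manager.
// It returns the sorted list of conflicting fields and leaves the object
// untouched when there are conflicts and force is false. A nil result
// means the apply went through.
func (o *object) apply(manager string, desired map[string]string, force bool) []string {
	var conflicts []string
	for field, want := range desired {
		have, exists := o.values[field]
		if !exists || have == want {
			continue // new field or identical value: no conflict
		}
		for other := range o.owners[field] {
			if other != manager {
				conflicts = append(conflicts, field)
				break
			}
		}
	}
	sort.Strings(conflicts)
	if len(conflicts) > 0 && !force {
		return conflicts
	}
	for field, want := range desired {
		have, exists := o.values[field]
		if !exists || have != want {
			// The value changes: the applier becomes the sole owner.
			o.values[field] = want
			o.owners[field] = map[string]bool{manager: true}
			continue
		}
		// Same value: the applier co-owns the field.
		o.owners[field][manager] = true
	}
	return nil
}

func main() {
	svc := newObject()
	port := "spec.ports[0].port"

	fmt.Println(svc.apply("a", map[string]string{port: "80"}, false))   // a creates the field
	fmt.Println(svc.apply("b", map[string]string{port: "8080"}, false)) // b conflicts with a
	fmt.Println(svc.apply("b", map[string]string{port: "8080"}, true))  // b forces ownership
	fmt.Println(svc.values[port], svc.owners[port])
}
```

The forced apply in main corresponds to the `--force-conflicts` hint printed by kubectl in the conflict message.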

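First, how kubectl patch executes

    I0726 15:02:01.540175 77947 loader.go:372] Config loaded from file: /Users/yangyu/.kube/config

The GET request fetches the original resource. It serves two main purposes:

- it is compared with the modified object returned by the PATCH request, to see whether anything was actually patched;
- it is used for the client-side dry-run.

So in effect only a single PATCH request is sent.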

Patch types supported by kubectl patch

- Strategic Merge Patch (SMP): --type=strategic (default)
- JSON Merge Patch: --type=merge
- JSON Patch: --type=json
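Of the three, JSON Merge Patch is the simplest to write down. The following is a minimal sketch of the merge rules from RFC 7386 on decoded JSON values; mergePatch and applyMergePatch are names chosen for this example, and this is not the code kubectl or the apiserver uses. Note how a null removes a member and how an array in the patch replaces the target array wholesale.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// mergePatch applies a JSON Merge Patch (RFC 7386 rules) to target and
// returns the result. Object targets are modified in place.
func mergePatch(target, patch interface{}) interface{} {
	patchObj, ok := patch.(map[string]interface{})
	if !ok {
		return patch // scalars and arrays replace the target wholesale
	}
	targetObj, ok := target.(map[string]interface{})
	if !ok {
		targetObj = map[string]interface{}{}
	}
	for key, value := range patchObj {
		if value == nil {
			delete(targetObj, key) // null removes the member
			continue
		}
		targetObj[key] = mergePatch(targetObj[key], value)
	}
	return targetObj
}

// applyMergePatch is a convenience wrapper working on JSON text.
func applyMergePatch(doc, patch string) (string, error) {
	var d, p interface{}
	if err := json.Unmarshal([]byte(doc), &d); err != nil {
		return "", err
	}
	if err := json.Unmarshal([]byte(patch), &p); err != nil {
		return "", err
	}
	out, err := json.Marshal(mergePatch(d, p))
	return string(out), err
}

func main() {
	doc := `{"spec":{"replicas":1,"paused":true},"tags":["a","b"]}`
	patch := `{"spec":{"replicas":3,"paused":null},"tags":["c"]}`
	out, err := applyMergePatch(doc, patch)
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	fmt.Println(out)
}
```

The wholesale replacement of arrays is the main practical difference from Strategic Merge Patch, which can merge some lists element by element.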

Other notes

- Usage of the three and how they differ => JSON Patch and JSON Merge Patch

- SMP demo

  - 💻 Demo => four.yaml

        kubectl apply -f data/four.yaml
        kubectl patch deployment nginx-dep-four --type strategic --patch-file data/four-patch.yaml

  - Observe the result

  - Reason: kubernetes/types.go at master · kubernetes/kubernetes (github.com)
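What the SMP demo shows can be modelled in a few lines. The sketch assumes, as the linked types.go indicates, that the containers list is declared with a merge strategy keyed on name while tolerations carries no merge strategy. The item type and the mergeByKey and replaceList helpers are hypothetical, and the element order produced here is a choice of this sketch that may differ from what the real implementation returns.

```go
package main

import "fmt"

// item is a flattened list element, e.g. one container or one toleration.
type item map[string]string

func cloneItem(in item) item {
	out := item{}
	for k, v := range in {
		out[k] = v
	}
	return out
}

// mergeByKey models a list whose patch strategy is "merge": elements are
// matched on key, matching elements are updated field by field, and
// unmatched patch elements are added.
func mergeByKey(current, patch []item, key string) []item {
	out := make([]item, 0, len(current)+len(patch))
	index := map[string]int{}
	for _, c := range current {
		index[c[key]] = len(out)
		out = append(out, cloneItem(c))
	}
	for _, p := range patch {
		if i, ok := index[p[key]]; ok {
			for k, v := range p {
				out[i][k] = v
			}
			continue
		}
		index[p[key]] = len(out)
		out = append(out, cloneItem(p))
	}
	return out
}

// replaceList models a list with no merge strategy: the patch wins wholesale.
func replaceList(_, patch []item) []item {
	return patch
}

func main() {
	containers := mergeByKey(
		[]item{{"name": "nginx", "image": "nginx:1.14.2"}, {"name": "tomcat", "image": "tomcat:latest"}},
		[]item{{"name": "redis", "image": "redis:latest"}},
		"name",
	)
	tolerations := replaceList(
		[]item{{"key": "key1", "value": "value1"}, {"key": "key2", "value": "value2"}},
		[]item{{"key": "key3", "value": "value3"}},
	)
	fmt.Println(len(containers), "containers after the patch")
	fmt.Println(len(tolerations), "toleration after the patch")
}
```

With the data from four.yaml and four-patch.yaml, this model ends up with three containers but only the single key3 toleration.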

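Back to the apply operation

For the second and subsequent apply operations

Since apply ultimately calls the PATCH API, where does the "patch.yaml" come from?

ThreeWayMergePatch

The changes are computed with a three-way merge patch (3WayMergePatch), and the PATCH API is then called with the result. Strategic Merge Patch is used by default (for resources whose schema cannot be found, JSON Merge Patch is used instead). The function is defined as follows:

    func CreateThreeWayMergePatch(original, modified, current []byte,,,) ([]byte, error)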

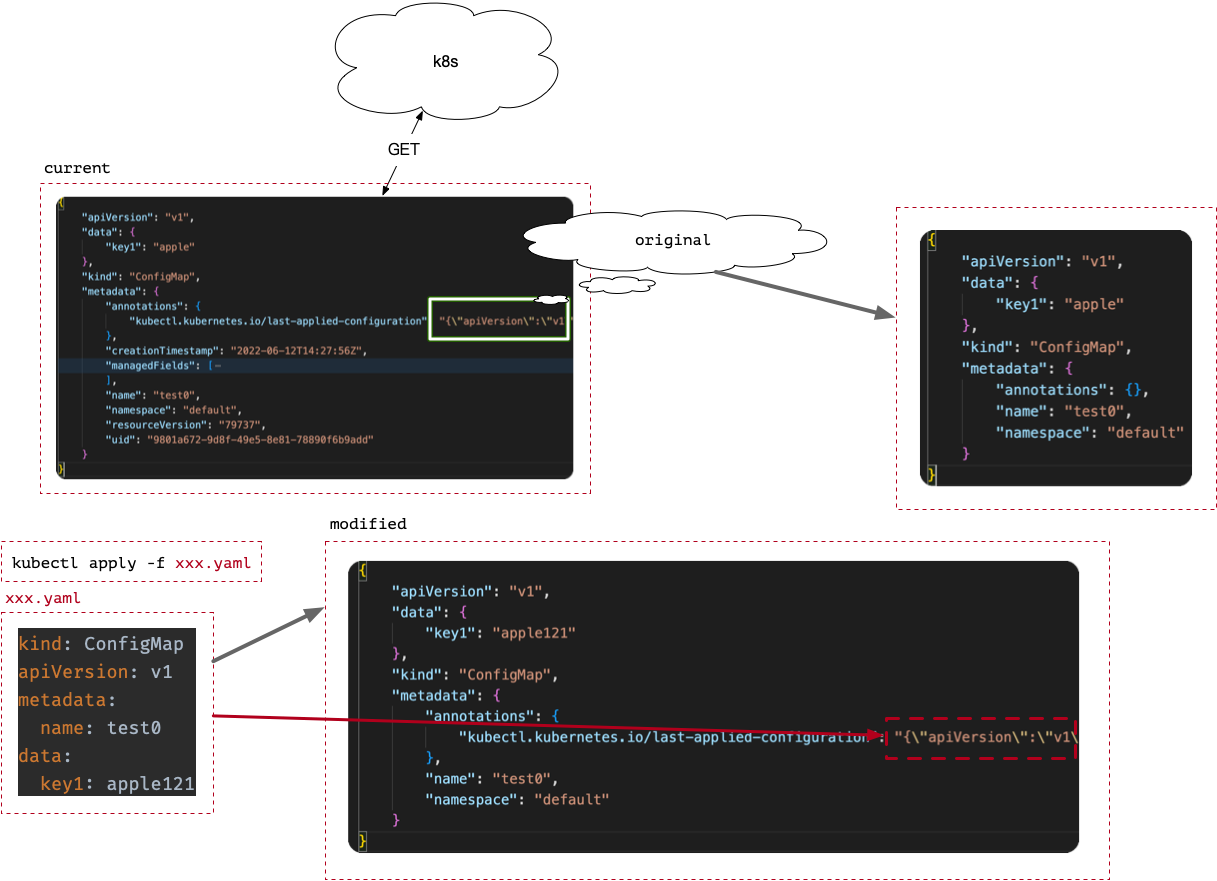

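The meaning of original, modified and current is as follows:

- current is the state of the resource in k8s right now, i.e. what a GET request returns from k8s;
- original is the value of the kubectl.kubernetes.io/last-applied-configuration annotation on current, i.e. what the previous apply stored there;
- modified is the content read from the "file" for this apply, plus an annotation whose key is kubectl.kubernetes.io/last-applied-configuration.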
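The relationship between modified and original can be sketched with plain encoding/json. The helper names withLastApplied, originalFrom and demoManifest are invented for this example, and the sketch assumes the manifest read from the file does not already carry the annotation; it is not kubectl's own code.

```go
package main

import (
	"encoding/json"
	"fmt"
)

const lastApplied = "kubectl.kubernetes.io/last-applied-configuration"

// withLastApplied builds "modified": the manifest as JSON plus an
// annotation holding the JSON form of the manifest itself.
func withLastApplied(manifest map[string]interface{}) ([]byte, error) {
	snapshot, err := json.Marshal(manifest)
	if err != nil {
		return nil, err
	}
	// Decode the snapshot again so that the caller's manifest is left untouched.
	var obj map[string]interface{}
	if err := json.Unmarshal(snapshot, &obj); err != nil {
		return nil, err
	}
	meta, ok := obj["metadata"].(map[string]interface{})
	if !ok {
		meta = map[string]interface{}{}
		obj["metadata"] = meta
	}
	annotations, ok := meta["annotations"].(map[string]interface{})
	if !ok {
		annotations = map[string]interface{}{}
		meta["annotations"] = annotations
	}
	annotations[lastApplied] = string(snapshot)
	return json.Marshal(obj)
}

// originalFrom extracts "original" from a live object. An empty result
// means the object carries no last-applied annotation.
func originalFrom(current []byte) (string, error) {
	var obj struct {
		Metadata struct {
			Annotations map[string]string `json:"annotations"`
		} `json:"metadata"`
	}
	if err := json.Unmarshal(current, &obj); err != nil {
		return "", err
	}
	return obj.Metadata.Annotations[lastApplied], nil
}

// demoManifest is a cut-down version of three.yaml.
func demoManifest() map[string]interface{} {
	return map[string]interface{}{
		"apiVersion": "v1",
		"kind":       "Service",
		"metadata":   map[string]interface{}{"name": "three-svc"},
	}
}

func main() {
	modified, err := withLastApplied(demoManifest())
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	fmt.Println(string(modified))

	// After this apply, the stored object becomes "current" for the next
	// one, and its annotation is what the next apply reads as "original".
	original, _ := originalFrom(modified)
	fmt.Println(original)
}
```

An object created with plain kubectl create has no such annotation, so original is empty on the first apply against it, which is what the Warning shown earlier is about.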

Next, three sets of data are computed.

deltaMap: the differences between current and modified, excluding deletions. This finds the fields whose values changed.

    deltaMapDiffOptions := DiffOptions{
        IgnoreDeletions: true,
        SetElementOrder: true,
    }
    deltaMap, err := diffMaps(currentMap, modifiedMap, schema, deltaMapDiffOptions)

deletionsMap: the deletions between original and modified. This finds the fields that were removed.

    deletionsMapDiffOptions := DiffOptions{
        SetElementOrder: true,
        IgnoreChangesAndAdditions: true,
    }
    deletionsMap, err := diffMaps(originalMap, modifiedMap, schema, deletionsMapDiffOptions)

Why does this have to be original versus modified? current may contain default-valued fields that were added automatically; comparing it with modified would mark those automatically added fields as deleted. With this in mind, look back at the earlier question about the missing annotation.

patchMap: deltaMap and deletionsMap are merged to produce patchMap.

    mergeOptions := MergeOptions{}
    patchMap, err := mergeMap(deletionsMap, deltaMap, schema, mergeOptions)

Finally, json.Marshal(patchMap) is returned. This concludes the patch computation.
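The three steps can be replayed on flat string maps. This is a deliberately reduced sketch: the fields and patch types and the threeWayPatch and applyPatch functions are hypothetical, nested objects and list merge keys are ignored, and the field names in main are just sample data. It still shows why deletions come from original rather than current, and what happens when original is empty.

```go
package main

import (
	"fmt"
	"sort"
)

// fields is a flattened resource: field path -> value.
type fields map[string]string

// patch is the result of the three-way comparison.
type patch struct {
	Set    fields   // plays the role of deltaMap
	Delete []string // plays the role of deletionsMap, sorted
}

// threeWayPatch computes additions and changes from current vs modified,
// and deletions from original vs modified.
func threeWayPatch(original, modified, current fields) patch {
	p := patch{Set: fields{}}
	for k, v := range modified {
		if cur, ok := current[k]; !ok || cur != v {
			p.Set[k] = v
		}
	}
	for k := range original {
		if _, ok := modified[k]; !ok {
			p.Delete = append(p.Delete, k)
		}
	}
	sort.Strings(p.Delete)
	return p
}

// applyPatch returns a copy of current with the patch applied.
func applyPatch(current fields, p patch) fields {
	out := fields{}
	for k, v := range current {
		out[k] = v
	}
	for k, v := range p.Set {
		out[k] = v
	}
	for _, k := range p.Delete {
		delete(out, k)
	}
	return out
}

func main() {
	original := fields{"replicas": "1", "minReadySeconds": "5"}
	modified := fields{"replicas": "2"}
	// "strategy" stands for a value defaulted by the server.
	current := fields{"replicas": "1", "minReadySeconds": "5", "strategy": "RollingUpdate"}

	p := threeWayPatch(original, modified, current)
	fmt.Println("set:", p.Set, "delete:", p.Delete)
	fmt.Println("result:", applyPatch(current, p))

	// With no last-applied annotation, original is empty and the removal
	// of minReadySeconds goes unnoticed.
	stale := applyPatch(current, threeWayPatch(fields{}, modified, current))
	fmt.Println("without original:", stale)
}
```

The defaulted strategy field survives in both runs because it never appears in original, while minReadySeconds is only removed when original records that it was applied before.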

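Calling the PATCH API

Once the patch data has been computed, the PATCH API is called and the apply operation is complete.

A small question

The document Update API Objects in Place Using kubectl patch | Kubernetes ends with a Note that says:

The SMP operation used when patching does not support CRs (custom resources).

What is the reason?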

Appendix

- one.yaml

      apiVersion: apps/v1
      kind: Deployment
      metadata:
        name: nginx-dep-one
        labels:
          app: nginx-dep-one
      spec:
        replicas: 1
        selector:
          matchLabels:
            app: nginx-one
        template:
          metadata:
            labels:
              app: nginx-one
          spec:
            containers:
            - name: nginx
              image: nginx:1.14.2
              ports:
              - containerPort: 800

- two.yaml

      apiVersion: apps/v1
      kind: Deployment
      metadata:
        name: nginx-dep-two
        labels:
          app: nginx-two
      spec:
        replicas: 1
        selector:
          matchLabels:
            app: nginx-two
        template:
          metadata:
            labels:
              app: nginx-two
          spec:
            containers:
            - name: nginx
              image: nginx:latest
              ports:
              - containerPort: 80
            - name: tomcat
              image: tomcat:latest
              ports:
              - containerPort: 8080

- three.yaml

      apiVersion: v1
      kind: Service
      metadata:
        name: three-svc
        labels:
          name: three-svc
      spec:
        ports:
        - port: 80
        selector:
          name: test-rc

- four.yaml

      apiVersion: apps/v1
      kind: Deployment
      metadata:
        name: nginx-dep-four
        labels:
          app: nginx-four
      spec:
        replicas: 1
        selector:
          matchLabels:
            app: nginx-four
        template:
          metadata:
            labels:
              app: nginx-four
          spec:
            tolerations:
            - key: "key1"
              operator: "Equal"
              value: "value1"
              effect: "NoSchedule"
            - key: "key2"
              operator: "Equal"
              value: "value2"
              effect: "NoSchedule"
            containers:
            - name: nginx
              image: nginx:1.14.2
              ports:
              - containerPort: 800
            - name: tomcat
              image: tomcat:latest
              ports:
              - containerPort: 8080

- four-patch.yaml

      spec:
        template:
          spec:
            tolerations:
            - key: "key3"
              operator: "Equal"
              value: "value3"
              effect: "NoSchedule"
            containers:
            - name: redis
              image: redis:latest
              ports:
              - containerPort: 6379
